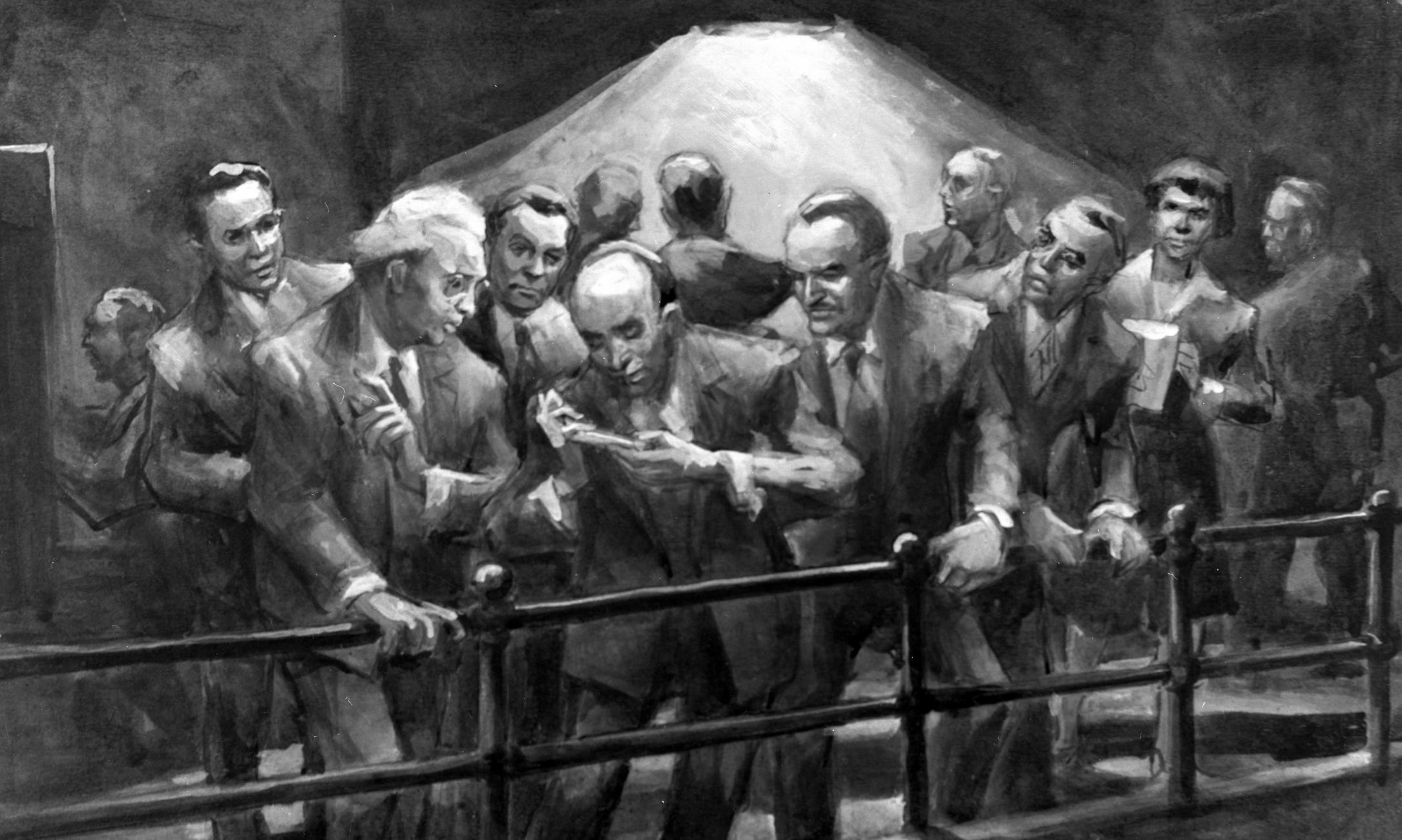

A painting by John Cadel recreating the Chicago Pile-1 experiment. (Image courtesy Argonne National Laboratory)

The University marks the 75th anniversary of Chicago Pile-1, the world’s first controlled, self-sustaining nuclear chain reaction.

On December 2, 1942, in an abandoned squash court underneath the former Stagg Field, where Mansueto Library and Henry Moore’s Nuclear Energy sculpture now stand, a team of 49 scientists and workers gathered on a balcony intended for spectators and stared at a 20-foot pile of bricks below.

Chicago Pile-1, or CP-1 for short, weighed more than 400 tons and consisted of layers of solid graphite bricks alternating with bricks embedded with uranium metal and uranium oxide, braced by a wooden frame—nearly $39 million worth of materials in today’s dollars. Within the pile, these components were reacting in ways invisible to the naked eye. As the radioactive uranium naturally decayed, it released fast neutrons through spontaneous nuclear fission. The graphite served as a moderator, slowing the released neutrons enough to be captured by other uranium nuclei, which normally would induce more fission in a chain reaction.

But the physicists had prevented such a chain reaction by inserting cadmium rods into the side of the pile to absorb the slowed neutrons. The rods, arranged in three redundant safety systems, were stopping—and controlling—a nuclear chain reaction. The main system, which included 10 rods—any one of which would stop the reaction—was manually inserted and retracted. A second system included two electronically operated fail-safe rods, programmed to automatically deploy if neutron intensity rose above a set safety point. And the last system was an emergency rod attached to a rope, to be cut in the event of a crisis. What would happen once the physicists removed the cadmium rods? Would the reaction peter out, or never even start? Would the pile explode?

Enrico Fermi, the Nobel Prize–winning physicist who led the experiment and would later join the UChicago faculty, was confident in his calculations. He assured fellow Nobelist Arthur Holly Compton that the pile would produce no more energy than could power a lightbulb. Compton, a UChicago physicist, directed the Metallurgical Laboratory, which conducted the experiment and later became Argonne National Laboratory. Before the experiment, a colleague asked Fermi what he would do if anything went wrong. He replied, “I will walk away—leisurely.”

On the morning of the experiment, Fermi ordered all rods removed, save for one of the manually operated rods. The emergency rod was hoisted on its rope, monitored by Norman Hilberry, Compton’s right-hand man. On the squash court floor, physicist George Weil operated the remaining main rod that served as a starter, accelerator, and brake. Weil slowly removed it over several hours as Fermi monitored the clicking neutron counter.

At 11:35 a.m. there was a loud clap. One of the automatic safety rods had slammed into the pile; the safety point had been set too low, triggering the mechanism (and a lunch break).

At 2:30 p.m. the experiment resumed. Weil again pulled out the remaining main rod in a series of measured increments, and the neutron intensity rose at a steadily increasing rate. Fermi ran calculations on his slide rule before announcing, “The reaction is self-sustaining. The curve is exponential.” The rods were replaced at 3:53 p.m. to end the reaction. Quiet applause rippled through the audience.

The experiment succeeded: CP-1 became the first reactor to go critical, that is, to maintain a controlled, self-sustaining nuclear chain reaction, producing half a watt of power. This “birth of the atomic age” formed the basis for decades of technological innovation that would change the course of human history.
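The difference between a reaction that peters out and one that sustains itself can be sketched with a simple generation-by-generation model. In the minimal Python sketch below, each generation of neutrons produces `k` times as many neutrons in the next one; `k` is the standard multiplication factor used in reactor physics. The function names, the starting count, and the sample values of `k` are illustrative assumptions, not measurements from CP-1, and the model ignores timing, delayed neutrons, and geometry.

```python
def neutron_population(k, generations, start=1000.0):
    """Return the neutron count for each generation.

    k is the multiplication factor: the average number of neutrons in
    one generation that go on to cause a fission for each neutron in
    the previous generation. The start value is arbitrary.
    """
    counts = [start]
    for _ in range(generations):
        counts.append(counts[-1] * k)
    return counts


def classify(k):
    """Label the reaction according to its multiplication factor."""
    if k < 1.0:
        return "subcritical"    # the reaction dies away
    if k == 1.0:
        return "critical"       # the reaction just sustains itself
    return "supercritical"      # the reaction grows exponentially


if __name__ == "__main__":
    # Illustrative values only: slightly below, at, and above 1.
    for k in (0.95, 1.0, 1.05):
        final = neutron_population(k, 100)[-1]
        print(f"k={k}: {classify(k)}, final count {final:.1f}")
```

With `k` below 1, as when the cadmium rods absorb enough neutrons, the count shrinks toward zero. With `k` even slightly above 1, the count grows by the same factor every generation, which is the exponential curve Fermi read off his instruments.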

CP-1 has a complicated legacy. The experiment was a cornerstone of the Manhattan Project, the Allied effort to develop nuclear weapons during World War II. The Germans “were advancing everywhere, they were conquering everywhere, and they were working on an atomic bomb,” said Roger Hildebrand, the Samuel K. Allison Distinguished Service Professor Emeritus in Physics, in a 2012 interview. “The consequence of losing a nuclear race was the preoccupation of everyone who knew that a nuclear bomb might be possible.”

Less than three years after CP-1 went critical, the United States dropped atomic bombs on the Japanese cities of Hiroshima and Nagasaki, killing at least 129,000 people—mostly civilians. The war, which had already claimed 50 million lives, was over within a month.

The development and use of nuclear weapons, as well as the potential for both beneficial and destructive technologies based on CP-1’s success, were not taken lightly. In June 1945, two months before Hiroshima and Nagasaki, a group of Manhattan Project scientists, led by UChicago physical chemistry professor and Nobelist James Franck, released The Franck Report, warning of an impending nuclear armaments race and unsuccessfully advocating for a demonstration of power by dropping an atomic bomb on an uninhabited area.

The same month the war ended, September 1945, another group of Manhattan Project scientists and UChicago professors, who “could not remain aloof to the consequences of their work,” established the Bulletin of the Atomic Scientists and a magazine of the same name. Its early years, according to the Bulletin’s mission statement, chronicled the “dawn of the nuclear age and the birth of the scientists’ movement, as told by the men and women who built the atomic bomb and then lobbied with both technical and humanist arguments for its abolition.”

Still active today, the Bulletin informs science leaders, policy makers, and the public about nuclear weapons and disarmament, the changing energy landscape, climate change, and emerging technologies. The Bulletin of the Atomic Scientists is the organization behind the annually reevaluated Doomsday Clock, set in 2017 at 2.5 minutes to midnight, where midnight represents the apocalypse. The clock symbolizes the world’s vulnerability to nuclear technologies, measured by the scientists who develop them.

To mark the 75th anniversary of Chicago Pile-1 and to address its far-reaching influence, the University of Chicago will hold lectures, seminars, workshops, multimedia presentations, music performances, and exhibitions throughout the fall quarter. The series of public events, titled Nuclear Reactions—1942: A Historic Breakthrough, an Uncertain Future, will begin in September and culminate in a two-day program on campus December 1 and 2.

Harnessing the atom

The ability to understand, harness, and control atomic energy, developed as a result of CP-1, has led to technological applications in nearly every aspect of modern life.

Agriculture

Radiation is used to prevent food spoilage and to induce mutations that yield stronger, more productive crops. Radiation is also used as a form of pest control; insects are sterilized and released into the wild, diluting the fertility of the local species.

Water

Nuclear isotopes reveal important information about the quality and availability of water sources, both above and below ground. Such techniques help countries identify, manage, and conserve this essential but diminishing natural resource.

Medicine

Nuclear techniques play an important role in many areas of modern medicine, including X-rays, equipment sterilization, and nutrient-uptake monitoring. Radiation therapy, pioneered by UChicago scientists in the early 1950s, is a crucial method of cancer treatment.

Household

Smoke detectors, which use radiation to detect the presence of smoke in the air, are the most common household use of nuclear technology. Radiation is also used to produce nonstick pans and glow-in-the-dark watches and clocks (which now use man-made, nontoxic tritium).

Industry

Manufacturers use nuclear techniques to assess, measure, and manipulate production materials. Radiotracers—chemical compounds in which one or more atoms have been replaced with a radioisotope—can literally and figuratively illuminate the way substances behave and interact.

Energy

Nuclear energy, a leading contender in the search for a sustainable alternative to carbon-emitting fossil fuels, accounted for 60 percent of low-emission power generation in the United States in 2016, according to the Nuclear Energy Institute.

Space

Nuclear technology is used as a light, sustainable source of heat and energy in space exploration, powering missions including the Voyager space probes, the New Horizons mission to Pluto, and Mars rovers Spirit, Opportunity, and Curiosity.